I. Introduction: The 2026 AI Infrastructure Reality

If you are building an AI startup in 2026, your biggest expense is not office rent, marketing, or even salaries. It is compute.

Machine learning today is not about basic servers. It is about massive GPU clusters running around the clock. One wrong infrastructure decision can double your burn rate. And for startups, burn rate determines survival.

The global cloud infrastructure market has entered an extreme growth phase because of generative AI. In 2026 alone, five of the largest technology companies (Amazon, Microsoft, Google, Meta, and Oracle) are projected to spend around $600 billion in capital expenditures. That is an increase of more than 30% over the previous year.

What is even more important is where this money is going. Roughly three-quarters of that spending is dedicated directly to AI infrastructure. That means building new data centers, installing advanced liquid cooling systems, and buying tens of thousands of Nvidia GPUs.

This is not just IT expansion. This is an infrastructure arms race.

For AI founders, the question is no longer “Which cloud provider is popular?” The question is: “Which cloud infrastructure gives me the most tokens per dollar?”

Because in 2026, compute equals survival.

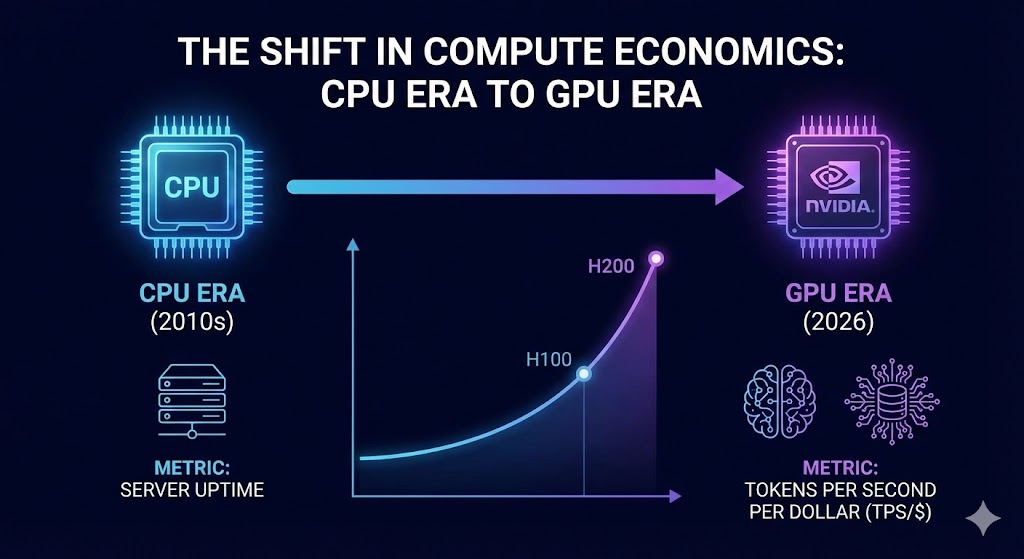

II. Historical Context: The Shift in Compute Economics

To understand today’s environment, we need to go back a decade.

In the 2010s, cloud hosting decisions were simple. Companies evaluated providers based on uptime, CPU availability, and storage pricing. Web traffic scaling and database reliability were the key concerns.

Then came generative AI.

Large language models and multimodal systems completely changed the economics of computing. CPUs were no longer enough. GPUs became the center of gravity.

The Nvidia A100 became the backbone of early generative AI breakthroughs. It enabled faster training of transformer-based models. But as models grew larger — billions, then hundreds of billions of parameters — even the A100 started hitting bottlenecks.

The Nvidia H100 changed the game with higher memory bandwidth and stronger tensor cores. The H200 improved on that with even greater memory capacity, allowing larger context windows for advanced AI models.

Now, Nvidia's Blackwell generation (the B200 and B300) is designed to handle trillion-parameter models with fewer nodes. Fewer nodes mean fewer interconnect hops, less communication delay, and better performance scaling.

But performance is not the only metric that matters.

In 2026, serious AI teams measure success in “Tokens Per Second per Dollar” (TPS/$). It is not about raw speed anymore. It is about cost efficiency. If your infrastructure produces 30% more output for the same dollar spend, that difference compounds dramatically over months.
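One way to read the metric is throughput divided by the hourly price of the hardware producing it. The sketch below makes that concrete. The function name, throughput figures, and hourly price are illustrative assumptions, not benchmarks.

```python
def tokens_per_dollar(tokens_per_second: float, price_per_hour: float) -> float:
    """Tokens generated for each dollar of rented compute."""
    if price_per_hour <= 0:
        raise ValueError("price_per_hour must be positive")
    # One hour of rental buys tokens_per_second * 3600 tokens.
    return tokens_per_second * 3600 / price_per_hour


# Hypothetical setups: same hourly price, the second has 30% more throughput.
baseline = tokens_per_dollar(tokens_per_second=1000, price_per_hour=10.0)
improved = tokens_per_dollar(tokens_per_second=1300, price_per_hour=10.0)

print(f"baseline: {baseline:,.0f} tokens per dollar")
print(f"improved: {improved:,.0f} tokens per dollar")
```

Because the price is held constant, the 30% throughput gain carries straight through to 30% more tokens for every dollar spent.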

This shift from CPU-era thinking to GPU-era efficiency has changed how startups and enterprises evaluate cloud hosting.

Blackwell Architecture

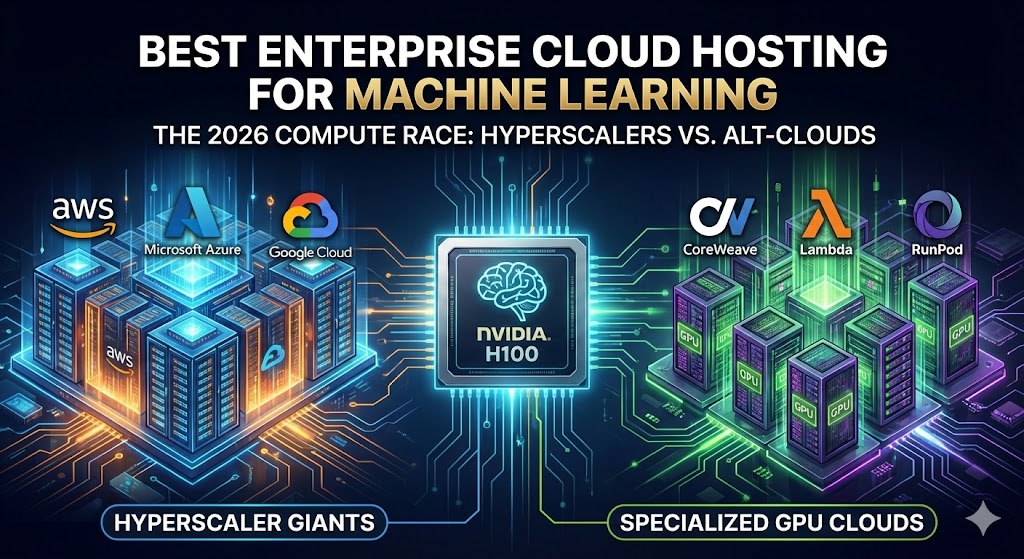

III. The Big Three vs. Specialized GPU Clouds

The cloud market is still dominated by three giants: Amazon Web Services (AWS), Microsoft Azure, and Google Cloud. Together, they control roughly two-thirds of global enterprise cloud infrastructure.

These platforms are powerful. They offer compliance certifications, enterprise-grade security, and massive ecosystems of tools. For regulated industries like finance or healthcare, this matters.

But power comes at a price.

In 2026, renting an 8x H100 GPU instance from a major hyperscaler can cost between $85 and $100 per hour. That works out to roughly $10.60 to $12.50 per GPU per hour. For continuous model training, that expense multiplies fast.

A startup running 32 GPUs (four such instances) nonstop for 30 days would spend roughly $245,000 to $290,000.
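The arithmetic behind that monthly figure is short enough to check directly. This is a minimal sketch that assumes the $85 to $100 instance prices quoted above and flat on-demand billing with no discounts. The function name is hypothetical.

```python
def monthly_gpu_cost(gpus: int, price_per_gpu_hour: float, days: int = 30) -> float:
    """Cost of running `gpus` GPUs around the clock for `days` days."""
    return gpus * price_per_gpu_hour * 24 * days


# Per-GPU rates derived from an 8-GPU instance at $85 and $100 per hour.
low = monthly_gpu_cost(32, 85 / 8)
high = monthly_gpu_cost(32, 100 / 8)

print(f"32 GPUs for 30 days: ${low:,.0f} to ${high:,.0f}")
```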

Because of these high costs, a new category has exploded in popularity: specialized GPU cloud providers.

Companies like CoreWeave, Lambda Labs, and RunPod focus almost entirely on AI workloads. They strip away unnecessary enterprise layers and optimize for raw GPU performance.

On these platforms, H100 rental pricing can range between $1.50 and $3 per GPU per hour. Against the hyperscaler rates above, that is roughly 70% to almost 90% cheaper.
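The size of the gap depends on which ends of the two price ranges are compared. The sketch below computes the narrowest and widest gaps from the figures in this section. The helper name is an assumption, and real quotes will vary by region and contract.

```python
def percent_cheaper(specialized_rate: float, hyperscaler_rate: float) -> float:
    """How much cheaper the specialized rate is, as a percentage."""
    return (1 - specialized_rate / hyperscaler_rate) * 100


# Hyperscaler per-GPU rates from an 8-GPU instance at $85 to $100 per hour.
hyperscaler_low, hyperscaler_high = 85 / 8, 100 / 8

# Narrowest gap: priciest specialized rate against cheapest hyperscaler rate.
smallest_gap = percent_cheaper(3.00, hyperscaler_low)
# Widest gap: cheapest specialized rate against priciest hyperscaler rate.
largest_gap = percent_cheaper(1.50, hyperscaler_high)

print(f"specialized clouds are {smallest_gap:.0f}% to {largest_gap:.0f}% cheaper")
```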

This pricing gap has created massive market disruption. CoreWeave, for example, grew from a niche infrastructure provider into a billion-dollar revenue company in a very short period simply by offering more efficient AI compute access.

For bootstrapped AI startups, this difference can extend runway by months. Instead of raising emergency funding rounds, they can optimize infrastructure.
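To see how a lower GPU rate turns into extra months, here is a rough runway calculation. The cash balance and non-compute burn are hypothetical, and the per-GPU rates are taken from the top of each price range in this section.

```python
def runway_months(cash: float, compute_per_month: float, other_per_month: float) -> float:
    """Months of runway at a constant monthly burn."""
    return cash / (compute_per_month + other_per_month)


cash = 2_000_000          # hypothetical cash in the bank
other_burn = 100_000      # hypothetical non-compute monthly burn
gpu_hours = 32 * 24 * 30  # 32 GPUs running nonstop for a 30-day month

hyperscaler = runway_months(cash, gpu_hours * 12.50, other_burn)
specialized = runway_months(cash, gpu_hours * 3.00, other_burn)

print(f"hyperscaler: {hyperscaler:.1f} months, specialized: {specialized:.1f} months")
```

Under these assumptions the cheaper rate more than doubles the runway, which is the effect the paragraph above describes.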

However, there is a tradeoff.

Specialized GPU clouds may lack some enterprise compliance certifications. They may have fewer data centers globally. And during periods of global GPU shortages, availability can become constrained.

So the decision is not purely about price. It is about risk tolerance and business model alignment.

🔗 AWS EC2 P5 Instances (H100-based)

IV. Key Requirements for Enterprise ML Hosting

When enterprises evaluate AI hosting in 2026, they look beyond just GPU cost.

The first factor is interconnect speed. AI training rarely happens on a single GPU. It happens across dozens or hundreds of GPUs working together. If the networking layer connecting them is slow, expensive GPUs sit idle.

Technologies like InfiniBand and advanced high-speed Ethernet are critical. Without them, distributed training performance collapses.
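A back-of-the-envelope model shows why link speed matters. The sketch below uses the standard bandwidth-only estimate for a ring all-reduce, in which each GPU transfers about 2(N-1)/N times the payload. It ignores latency and any overlap with computation. The model size, gradient precision, and link speeds are illustrative assumptions.

```python
def ring_allreduce_seconds(payload_bytes: float, gpus: int, link_bytes_per_s: float) -> float:
    """Bandwidth-only time estimate for one ring all-reduce."""
    if gpus < 2:
        return 0.0  # nothing to synchronize on a single GPU
    # Each GPU sends and receives 2 * (N - 1) / N times the payload.
    return 2 * (gpus - 1) / gpus * payload_bytes / link_bytes_per_s


grad_bytes = 7e9 * 2  # hypothetical 7B-parameter model with 2-byte gradients

fast = ring_allreduce_seconds(grad_bytes, gpus=64, link_bytes_per_s=50e9)    # ~400 Gb/s link
slow = ring_allreduce_seconds(grad_bytes, gpus=64, link_bytes_per_s=12.5e9)  # ~100 Gb/s link

print(f"fast link: {fast:.2f} s per sync, slow link: {slow:.2f} s per sync")
```

If a training step computes for about a second, the slower link in this example would leave the GPUs waiting on the network for longer than they spend computing.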

Second is hardware availability. Having budget approval for H200 or Blackwell GPUs does not guarantee availability. Global supply chains are still tight. Many providers have waiting lists.

Third is data sovereignty. Governments around the world are tightening regulations around data localization. Enterprises cannot always train AI models in foreign data centers.

In India, Europe, and parts of the Middle East, regulations increasingly require that certain types of data remain within national borders. Cloud providers must offer geographically isolated clusters.

This geopolitical dimension adds complexity. An enterprise may train models on a low-cost GPU cloud in one region but deploy production applications in a hyperscaler environment that meets regulatory requirements.

Fourth is reliability and redundancy. Downtime during model training can corrupt checkpoints, discard progress since the last save, and waste compute cycles. Enterprises require service-level agreements that guarantee performance stability.

Finally, cooling and energy efficiency matter more than ever. AI workloads generate massive heat. Data centers are investing heavily in liquid cooling systems. Efficient cooling reduces long-term infrastructure costs, which can indirectly influence pricing models.

🔗 Azure ND H100 v5 Series

V. The Hybrid Future: Extending the Startup Runway

The smartest AI startups in 2026 are not choosing one type of provider. They are choosing both: a specialized GPU cloud and a hyperscaler.

Training large foundation models on specialized GPU clouds can cut compute costs dramatically. Once models are trained and fine-tuned, companies often migrate inference workloads to hyperscalers.

Why?

Because enterprise customers trust AWS, Azure, and Google Cloud. They are comfortable signing contracts when compliance certifications are in place.

This hybrid strategy allows startups to maximize cost efficiency while maintaining enterprise credibility.

For example, a startup building an AI analytics tool might train its core model on a low-cost GPU cloud, then deploy its customer-facing application on Azure to meet enterprise security standards.

This approach balances speed, cost, and trust.

The broader trend is clear. AI infrastructure decisions are now strategic financial decisions.

In 2026, cloud hosting is no longer background IT plumbing. It determines valuation, funding runway, and competitive advantage.

VI. Conclusion: The Real Cost of AI Power

The AI revolution is not just about smarter algorithms. It is about who controls compute.

With hyperscalers spending roughly $600 billion on infrastructure and specialized GPU clouds disrupting pricing models, the landscape is changing rapidly.

For startups, every dollar saved on compute extends runway. For enterprises, infrastructure reliability and compliance are non-negotiable.

The future is not hyperscaler versus alt-cloud. It is intelligent allocation between them.

If you are building in AI today, the most important financial question is not just how much funding you raised.

It is this:

How efficiently are you converting GPU hours into product value?

Because in 2026, compute efficiency is the new competitive edge.

And those who understand this early will build faster, scale smarter, and survive longer in the AI infrastructure race.

Frequently Asked Questions (FAQ)

1. What is the best cloud hosting platform for machine learning in 2026?

The best cloud hosting platform depends on your stage and compliance needs. Large enterprises typically choose AWS or Azure due to regulatory certifications and global infrastructure. AI-native startups often prefer specialized GPU providers like CoreWeave or Lambda Labs because of significantly lower H100 rental costs. Many companies now use a hybrid model—training on low-cost GPU clouds and deploying production workloads on hyperscalers.

2. How much does it cost to rent an Nvidia H100 GPU in 2026?

In 2026, hyperscalers such as AWS and Azure typically charge around $10.60 to $12.50 per GPU per hour for on-demand H100 capacity (an 8-GPU instance at $85 to $100 per hour). Specialized GPU cloud providers may offer H100 rental between $1.50 and $3 per GPU per hour depending on availability and contract terms. Pricing varies based on commitment length, region, and supply constraints.

3. What is the difference between H100, H200, and Blackwell GPUs?

The H100 introduced major performance improvements for large language model training. The H200 offers higher memory capacity and bandwidth, which helps reduce bottlenecks in large-context models. Nvidia’s newer Blackwell architecture (B200/B300) is designed for trillion-parameter models and improved energy efficiency, making it better suited for next-generation AI workloads.

4. Is it cheaper to use specialized GPU clouds instead of AWS?

For raw model training, specialized GPU clouds are often significantly cheaper. However, hyperscalers provide stronger enterprise compliance, broader service ecosystems, and global reliability. Startups focused on cost optimization often train on specialized providers and deploy final applications on AWS or Azure for enterprise credibility.

5. What does “Tokens Per Dollar” mean in AI infrastructure?

Tokens per dollar, often tracked as tokens per second per dollar (TPS/$), measures how efficiently an infrastructure setup converts compute spend into AI output. Instead of just measuring speed, AI teams evaluate how many tokens a model can generate per dollar spent. This metric helps startups optimize burn rate and extend runway.

6. Are GPU clouds affected by supply shortages in 2026?

Yes. High demand for AI infrastructure has created periodic GPU shortages. Even if a company has budget approval, certain regions may experience limited availability for H200 or Blackwell GPUs. It is important to confirm hardware allocation before signing long-term contracts.

7. Is data sovereignty important when choosing an AI cloud provider?

Yes. Many governments now require certain types of data to remain within national borders. Enterprises must ensure that their cloud provider offers region-specific deployment options to comply with local data regulations.

8. How can AI startups reduce their cloud burn rate?

AI startups can reduce cloud costs by:

- Using spot or reserved pricing models
- Testing alternative GPU providers
- Optimizing model architecture for efficiency
- Shutting down idle instances
- Adopting hybrid infrastructure strategies

Even small improvements in compute efficiency can save hundreds of thousands of dollars annually.
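Idle instances are the easiest item on that list to put a number on. The sketch below estimates the yearly cost of leaving a single 8-GPU node running while nobody uses it. The idle hours and the per-GPU rate are assumptions chosen for illustration.

```python
def annual_idle_cost(gpus: int, price_per_gpu_hour: float, idle_hours_per_day: float) -> float:
    """Yearly spend on GPUs that are billed but doing no work."""
    return gpus * price_per_gpu_hour * idle_hours_per_day * 365


# Hypothetical: one 8-GPU node at $12.50 per GPU-hour, idle 6 hours every day.
wasted = annual_idle_cost(gpus=8, price_per_gpu_hour=12.50, idle_hours_per_day=6)

print(f"idle spend per year: ${wasted:,.0f}")
```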

9. Should enterprises lock into long-term GPU contracts?

Long-term contracts can significantly reduce hourly rates, but they also increase commitment risk. Enterprises should balance pricing discounts against hardware evolution cycles, as new GPU architectures can quickly outperform older models.
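A simple way to frame the commitment risk is break-even utilization: the share of committed hours that must actually be used before the contract beats on-demand pricing. The sketch below assumes the committed rate is paid for every hour whether used or not. Both rates are hypothetical.

```python
def break_even_utilization(committed_rate: float, on_demand_rate: float) -> float:
    """Fraction of committed hours that must be used for the contract to pay off."""
    return committed_rate / on_demand_rate


def cheaper_option(utilization: float, committed_rate: float, on_demand_rate: float) -> str:
    """Compare cost per committed hour at a given expected utilization."""
    on_demand_cost = utilization * on_demand_rate  # pay only for hours used
    return "committed" if committed_rate < on_demand_cost else "on-demand"


# Hypothetical rates: $10.00 on demand, $6.00 with a long-term commitment.
threshold = break_even_utilization(6.00, 10.00)

print(f"break-even utilization: {threshold:.0%}")
print("at 80% utilization:", cheaper_option(0.80, 6.00, 10.00))
print("at 40% utilization:", cheaper_option(0.40, 6.00, 10.00))
```

The same calculation can be rerun with the price a newer GPU generation is expected to reach, which is where the hardware-evolution risk shows up.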

10. What is the safest long-term AI infrastructure strategy?

The safest approach in 2026 is diversification. Avoid depending entirely on a single cloud provider. Maintain flexibility to shift workloads between hyperscalers and specialized GPU clouds as pricing and hardware availability evolve.

People Also Ask (PAA)

Is AWS the best cloud for AI startups in 2026?

AWS is one of the most reliable platforms for AI startups that plan to serve enterprise clients. It offers strong compliance certifications, global data centers, and advanced AI services. However, for early-stage startups focused on reducing GPU training costs, specialized cloud providers may offer significantly lower hourly rates. Many startups combine both options using a hybrid strategy.

Why are H100 GPU prices so high on hyperscalers?

H100 GPUs are in extremely high demand due to generative AI growth. Hyperscalers also bundle infrastructure costs such as networking, storage, compliance certifications, and managed services into their pricing. This results in hourly costs often exceeding $10 per GPU, especially for on-demand instances.

What is the cheapest cloud provider for AI model training?

Specialized GPU cloud providers like CoreWeave, Lambda Labs, and RunPod typically offer lower hourly GPU rental rates compared to hyperscalers. These providers focus specifically on AI workloads and optimize for cost efficiency. However, availability and enterprise compliance features may vary by provider.

Should startups use spot instances for AI training?

Spot instances can reduce costs significantly, but they come with interruption risk. For non-critical experiments or distributed training jobs that can tolerate pauses, spot pricing can extend runway. For production-level workloads or time-sensitive training, dedicated reserved instances are safer.
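The tradeoff can be estimated by inflating the spot bill for work that has to be redone after interruptions. This is a rough sketch. The spot rate, the on-demand rate, and the 15% rework overhead are assumptions, and real overhead depends on checkpoint frequency and how often the provider reclaims capacity.

```python
def effective_spot_cost(useful_gpu_hours: float, spot_rate: float, rework_fraction: float) -> float:
    """Spot bill including extra hours spent redoing interrupted work."""
    billed_hours = useful_gpu_hours * (1 + rework_fraction)
    return billed_hours * spot_rate


useful_hours = 1000  # hypothetical job size in GPU-hours
on_demand = useful_hours * 10.00
spot = effective_spot_cost(useful_hours, spot_rate=4.00, rework_fraction=0.15)

print(f"on-demand: ${on_demand:,.0f}, spot with rework: ${spot:,.0f}")
```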

How much does it cost to train a large language model in 2026?

Training costs vary widely depending on model size and duration. Smaller fine-tuned models may cost tens of thousands of dollars, while large foundation models can cost millions of dollars in GPU time. Efficient architecture design and infrastructure selection greatly influence total expenditure.
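For a first-order estimate, a common rule of thumb puts training compute at about 6 FLOPs per parameter per training token. The sketch below turns that into GPU-hours and dollars. The model size, token count, peak throughput, and utilization are all placeholder assumptions, so check the vendor datasheet and your own measured utilization before relying on the result.

```python
def training_gpu_hours(params: float, tokens: float,
                       peak_flops_per_gpu: float, utilization: float) -> float:
    """GPU-hours from the ~6 * params * tokens training-compute approximation."""
    total_flops = 6 * params * tokens
    effective_flops_per_s = peak_flops_per_gpu * utilization
    return total_flops / effective_flops_per_s / 3600


# Hypothetical run: 7B parameters, 1T tokens, 1e15 FLOP/s peak, 40% utilization.
hours = training_gpu_hours(params=7e9, tokens=1e12,
                           peak_flops_per_gpu=1e15, utilization=0.4)

cost_specialized = hours * 3.00   # top of the specialized range quoted earlier
cost_hyperscaler = hours * 12.50  # top of the hyperscaler range quoted earlier

print(f"{hours:,.0f} GPU-hours")
print(f"${cost_specialized:,.0f} to ${cost_hyperscaler:,.0f}")
```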

Is it better to train on alt-cloud and deploy on AWS?

Yes, this hybrid approach is increasingly common. Startups often train models on lower-cost GPU clouds and deploy production APIs on AWS or Azure to meet enterprise compliance requirements. This strategy balances cost efficiency with customer trust.

Are H200 and Blackwell GPUs worth the higher cost?

For very large models requiring extended context windows and higher memory bandwidth, H200 and Blackwell GPUs provide performance improvements that may justify their premium pricing. For smaller models, the cost difference may not generate proportional performance benefits.

How do AI startups negotiate better cloud pricing?

Startups can negotiate committed-use discounts, request volume-based pricing, compare multiple providers before signing contracts, and demonstrate predictable GPU usage patterns. Cloud providers often offer better rates for long-term agreements or guaranteed workload commitments.