For an artificial intelligence startup in 2026, choosing the right cloud provider is no longer just an IT infrastructure decision; it is a financial decision that directly dictates your startup’s runway.

In the early days of the generative AI boom, securing compute power was a matter of survival, and companies paid whatever the market demanded. However, the market has rapidly matured. The core metric for machine learning teams is no longer just server uptime—it is Tokens Per Second per Dollar (TPS/$).
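As a rough illustration of the metric, the sketch below treats TPS/$ as "tokens generated per dollar of GPU rental". That reading, the function name, and the 1,500 tokens/s throughput figure are assumptions made for the example; only the two hourly rates come from the pricing quoted later in this article.

```python
def tokens_per_dollar(tokens_per_second: float, price_per_gpu_hour: float) -> float:
    """Tokens produced for each dollar spent on one rented GPU."""
    if price_per_gpu_hour <= 0:
        raise ValueError("price_per_gpu_hour must be positive")
    # tokens per hour divided by dollars per hour
    return tokens_per_second * 3600 / price_per_gpu_hour


# Hypothetical throughput; measure your own model and serving stack.
ASSUMED_TPS = 1500.0

for label, rate in [("$3.93/hr GPU", 3.93), ("$2.99/hr GPU", 2.99)]:
    print(f"{label}: {tokens_per_dollar(ASSUMED_TPS, rate):,.0f} tokens per dollar")
```

The same throughput on a cheaper GPU-hour yields more tokens per dollar, which is why hourly price alone is only half of the comparison: a provider with slower networking or older drivers can lose the advantage if measured throughput drops.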

The supply of top-tier GPUs, specifically the NVIDIA H100, has stabilized, leading to a massive price arbitrage opportunity. Today, AI founders are faced with a critical choice: stick with the legacy “Hyperscaler Giants” or migrate to the highly specialized, aggressive “Alt-Clouds” that are entirely rewiring the economics of machine learning.

Here is a 2026 breakdown of the best enterprise cloud hosting options for your AI workloads, based on approximate on-demand pricing at the time of writing.

I. The Hyperscalers: AWS, Google Cloud, and Azure

The “Big Three” have historically dominated the enterprise market. They offer unmatched reliability, extreme data security, and seamless integration with existing corporate tech stacks. However, this premium ecosystem comes at a premium price.

While early adopters in 2023 and 2024 were paying $7.50 to $11.00 or more per GPU-hour for an H100, the hyperscalers were forced to cut prices by nearly half in mid-2025 to stay competitive.

Current 2026 On-Demand Pricing (Approximate):

Amazon Web Services (AWS): An AWS P5 instance (which houses 8x H100 GPUs) translates to roughly $3.93 per GPU-hour in the US East region.

Google Cloud (GCP): The A3-high instances sit at roughly $3.00 per GPU-hour.

Microsoft Azure: The Standard_NC40ads_H100_v5 instances currently command a premium at roughly $6.98 per GPU-hour in the East US region.

The Verdict for Hyperscalers: If you are a heavily regulated enterprise (like a bank or healthcare provider) that requires strict compliance, custom VPCs, and a massive existing infrastructure ecosystem, the hyperscalers remain the safest choice. But for agile ML startups, these prices will burn through your venture capital fast.
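To see what those per-GPU-hour figures mean for a budget, here is a minimal sketch that converts them into a monthly bill. The 8-GPU node size, the 30-day month, and the `utilization` parameter are assumptions for illustration; the rates are the approximate on-demand prices quoted above.

```python
HOURS_PER_MONTH = 24 * 30  # assumes a 30-day month

# Approximate on-demand H100 rates per GPU-hour, as quoted in this article.
HYPERSCALER_RATES = {
    "AWS P5": 3.93,
    "GCP A3-high": 3.00,
    "Azure NC40ads H100 v5": 6.98,
}


def monthly_cost(rate_per_gpu_hour: float, gpus: int = 8, utilization: float = 1.0) -> float:
    """Monthly spend for `gpus` GPUs running `utilization` (0..1) of the time."""
    return rate_per_gpu_hour * gpus * HOURS_PER_MONTH * utilization


for provider, rate in HYPERSCALER_RATES.items():
    print(f"{provider}: ${monthly_cost(rate):,.2f} per month for 8 GPUs at 24/7 usage")
```

Lowering `utilization` shows how much of the bill comes from idle time, which on-demand pricing lets you avoid by shutting instances down.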

AWS P5 instance pricing and specs

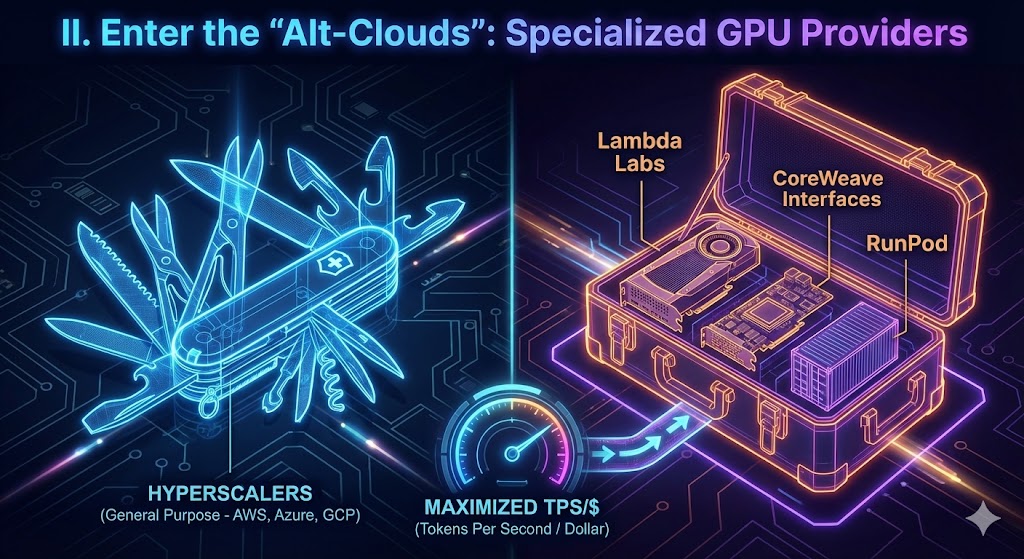

II. Enter the “Alt-Clouds”: Specialized GPU Providers

The most significant shift in the 2026 cloud landscape is the dominance of “Alt-Clouds.” These are specialized hosting providers built from the ground up specifically for high-performance computing (HPC) and AI training. Because they don’t carry the overhead of legacy cloud services, they offer top-tier GPUs at a massive discount.

Current 2026 On-Demand Pricing (Approximate):

Lambda Labs: A massive favorite in the research and academic community, offering on-demand H100s for a flat $2.99 per hour. They are also pushing the frontier by offering next-generation NVIDIA B200 instances for roughly $4.99 per hour.

CoreWeave: Initially famous for crypto-mining, CoreWeave pivoted to AI and offers heavy-duty, interconnected H100 clusters. Pricing can hover around $6.16 per hour for specialized high-speed network configurations.

RunPod: Highly popular for containerized AI workloads and flexible experimentation, RunPod offers H100 PCIe instances for roughly $2.39 per hour, and A100s for as low as $1.64 per hour.

The Verdict for Alt-Clouds: For model fine-tuning, training runs, and production inference where raw compute cost is your primary concern, Tier-1 Alt-Clouds like Lambda Labs and RunPod offer the best balance of reliability and price.
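The size of the discount depends heavily on which two providers you compare. The sketch below computes the percentage saved when moving from a baseline rate to an alternative one; the function name and the chosen pairs are illustrative, and the rates are the approximate figures quoted in this article.

```python
def savings_pct(baseline_rate: float, alternative_rate: float) -> float:
    """Percentage saved by paying `alternative_rate` instead of `baseline_rate`."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return (baseline_rate - alternative_rate) / baseline_rate * 100


# Approximate H100 rates per GPU-hour quoted in this article.
BASELINES = {"AWS P5": 3.93, "Azure NC40ads H100 v5": 6.98}
ALTERNATIVES = {"Lambda Labs": 2.99, "RunPod (PCIe)": 2.39}

for base_name, base_rate in BASELINES.items():
    for alt_name, alt_rate in ALTERNATIVES.items():
        pct = savings_pct(base_rate, alt_rate)
        print(f"{base_name} -> {alt_name}: {pct:.1f}% lower per GPU-hour")
```

Note that the RunPod figure is for a PCIe H100, so a like-for-like comparison should also account for interconnect and throughput differences, not just the hourly rate.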

Lambda Labs GPU cloud compute options

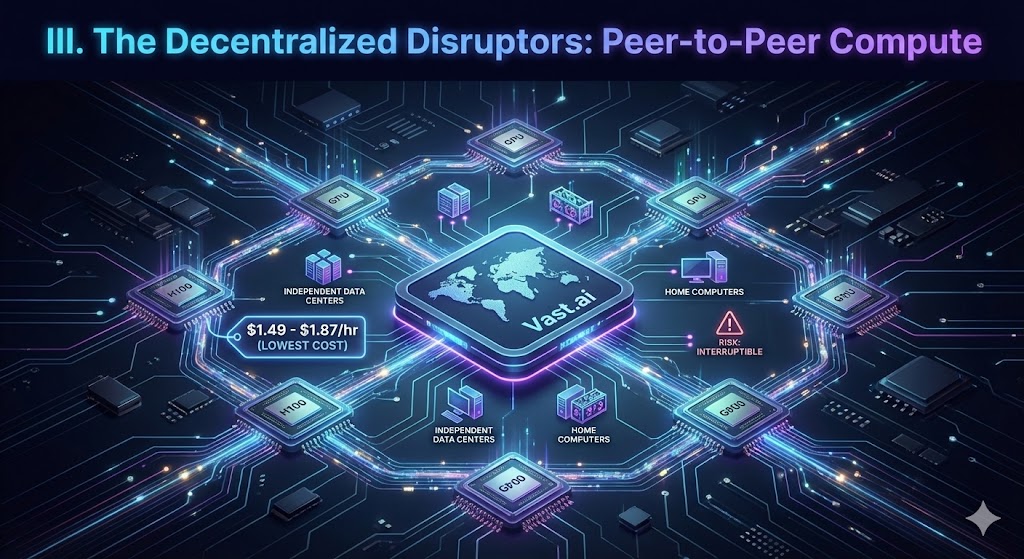

III. The Decentralized Disruptors (Peer-to-Peer Compute)

For teams operating on absolute shoestring budgets or running highly fault-tolerant workloads, peer-to-peer GPU marketplaces have emerged as the ultimate cost-hack.

Platforms like Vast.ai operate similarly to Airbnb but for GPU compute. Independent data centers and individuals rent out their hardware to the network.

The Advantage: You can frequently secure an H100 for $1.49 to $1.87 per hour, or an older A100 for as little as $1.27 per hour.

The Catch: Because these are interruptible instances hosted by third parties, you sacrifice enterprise-grade security and face the risk of your instance going down without warning. It is perfect for experimentation, but incredibly risky for live customer inference.
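One common way to make a job tolerant of that kind of interruption is to checkpoint progress regularly and resume from the last checkpoint. The following is a minimal, framework-free sketch of the pattern: the file layout, function names, and the `stop_after` argument (which only simulates an interruption) are assumptions for illustration, and a real training job would save model and optimizer state rather than a step counter.

```python
import json
import os
import tempfile


def save_checkpoint(path: str, state: dict) -> None:
    """Write to a temp file, then atomically replace, so a crash never leaves a half-written file."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
    os.replace(tmp_path, path)


def load_checkpoint(path: str) -> dict:
    """Return the saved state, or a fresh one if no checkpoint exists yet."""
    if not os.path.exists(path):
        return {"step": 0}
    with open(path) as f:
        return json.load(f)


def run(path: str, total_steps: int, checkpoint_every: int = 100, stop_after=None) -> int:
    """Run (or resume) the job; `stop_after` simulates the host disappearing mid-run."""
    state = load_checkpoint(path)
    start = state["step"]
    for step in range(start, total_steps):
        # ... one unit of real work (e.g. a training step) would go here ...
        state["step"] = step + 1
        if state["step"] % checkpoint_every == 0:
            save_checkpoint(path, state)
        if stop_after is not None and state["step"] - start >= stop_after:
            return state["step"]  # interrupted: progress since last checkpoint is lost
    save_checkpoint(path, state)
    return state["step"]


with tempfile.TemporaryDirectory() as workdir:
    ckpt = os.path.join(workdir, "ckpt.json")
    reached = run(ckpt, total_steps=1000, stop_after=250)  # simulated interruption
    resumed_from = load_checkpoint(ckpt)["step"]
    finished = run(ckpt, total_steps=1000)                 # resumes from the checkpoint
    print(reached, resumed_from, finished)  # prints: 250 200 1000
```

The checkpoint interval is the trade-off to tune: shorter intervals waste less work per interruption but spend more time writing state. On a peer-to-peer host, the checkpoint should also live on storage that survives the instance.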

IV. The Ultimate Question: Buy vs. Rent in 2026?

With cloud prices dropping, does it make sense to just buy the hardware yourself?

The base price to purchase a single NVIDIA H100 GPU in 2026 sits around $25,000, but the all-in cost can easily exceed $30,000 once you factor in necessary infrastructure like networking and cooling.

If you rent an H100 from an Alt-Cloud at $2.99 per hour, running it 24/7 will cost you roughly $2,153 per month ($2.99 × 24 hours × 30 days). Against an all-in purchase cost of about $30,000, the break-even point is roughly 14 months of continuous, non-stop usage.
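The break-even arithmetic can be reproduced in a few lines. This is a simplified sketch: it assumes a 30-day month and ignores electricity, hosting, staffing, and resale value, all of which would shift the result. The `utilization` parameter is an addition for illustration.

```python
HOURS_PER_MONTH = 24 * 30  # assumes a 30-day month


def monthly_rental(rate_per_hour: float, utilization: float = 1.0) -> float:
    """Monthly rental bill for one GPU used `utilization` (0..1) of the time."""
    return rate_per_hour * HOURS_PER_MONTH * utilization


def break_even_months(purchase_cost: float, rate_per_hour: float, utilization: float = 1.0) -> float:
    """Months of rental spend needed to equal the purchase cost."""
    return purchase_cost / monthly_rental(rate_per_hour, utilization)


print(f"Monthly rental at $2.99/hr: ${monthly_rental(2.99):,.2f}")
print(f"Break-even vs $30,000 all-in: {break_even_months(30_000, 2.99):.1f} months")
print(f"Break-even vs $25,000 base:   {break_even_months(25_000, 2.99):.1f} months")
print(f"Break-even at 50% utilization: {break_even_months(30_000, 2.99, 0.5):.1f} months")
```

The last line is the one most startups should look at: if the GPU would sit idle half the time, the break-even horizon doubles, while a rented instance simply gets switched off.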

Unless your startup has highly predictable, 24/7 compute needs that span well over a year, locking up $200,000 or more of your capital (for example, eight H100s at about $25,000 each) in rapidly depreciating hardware is a poor financial strategy, especially with NVIDIA H200s and Blackwell chips hitting the market. Renting provides the agility your startup needs to pivot.

The Bottom Line

The 2026 compute race is no longer about who has the hardware; it’s about who offers the smartest pricing. If your team is still running standard ML workloads on legacy hyperscalers by default, you are likely overpaying by 40% to 60%. Optimizing your stack to utilize specialized GPU clouds isn’t just an engineering win—it’s a competitive business advantage.

Frequently Asked Questions (FAQ)

Q: What is the difference between Hyperscalers and Alt-Clouds for machine learning?

A: Hyperscalers (like AWS, Google Cloud, and Azure) provide massive, globally integrated ecosystems with strict enterprise security and compliance, but they charge premium prices. Alt-Clouds (like Lambda Labs, CoreWeave, and RunPod) specialize almost entirely in high-performance GPU computing. By stripping away legacy enterprise overhead, Alt-Clouds can offer the exact same NVIDIA chips at a fraction of the cost.

Q: How much does it cost to rent an NVIDIA H100 GPU in 2026?

A: Pricing varies heavily depending on your cloud provider. Legacy hyperscalers generally charge between $3.00 and $7.00 per GPU-hour. However, specialized Alt-Clouds offer significant price arbitrage, frequently providing on-demand H100 access for roughly $2.50 to $3.00 per hour. Decentralized networks can drop prices even further, sometimes below $2.00 per hour.

Q: Should an AI startup buy or rent GPUs for model training?

A: For most AI startups, renting is financially safer. Purchasing a single H100 with its supporting infrastructure requires upwards of $30,000 in upfront capital and takes roughly 14 months of continuous, 24/7 usage to break even. Renting preserves your venture capital and prevents you from being locked into rapidly depreciating hardware as newer architectures hit the market.

Q: Are decentralized GPU networks safe for enterprise AI workloads?

A: Decentralized or peer-to-peer networks (like Vast.ai) are excellent for cheap, experimental model training or processing non-sensitive datasets. However, because you are renting compute power from independent, third-party data centers, they generally lack the strict regulatory compliance (such as SOC2 or HIPAA) required for live enterprise inference handling sensitive user data.

Q: Which cloud provider offers the best Tokens Per Second per Dollar (TPS/$)?

A: If raw compute cost is your primary metric, Tier-1 Alt-Clouds like Lambda Labs and RunPod consistently offer the best TPS/$. They provide the necessary bandwidth and high-end GPUs (A100s and H100s) at aggressive price points specifically tuned for machine learning efficiency.

People Also Ask (PAA)

Which cloud provider is best for machine learning?

The best provider depends on your infrastructure needs. For strict enterprise security and compliance, AWS or Microsoft Azure are the industry standards. However, for startups focused purely on cost-effective model training, specialized “Alt-Clouds” like Lambda Labs and CoreWeave offer significantly better GPU pricing.

What is the best alternative to AWS for AI?

The best alternatives to AWS for AI workloads are specialized GPU providers known as “Alt-Clouds.” Platforms like Lambda Labs, CoreWeave, and RunPod focus exclusively on high-performance compute, offering access to top-tier NVIDIA H100 and A100 GPUs at up to a 50% discount compared to traditional hyperscalers.

How much does an NVIDIA H100 cost per hour on AWS?

As of 2026, renting an NVIDIA H100 GPU on AWS via a P5 instance costs approximately $3.93 per hour in standard US regions. In contrast, specialized Alt-Clouds often provide the exact same H100 compute power for roughly $2.50 to $2.99 per hour.

Is it cheaper to buy GPUs or use cloud hosting?

For most AI startups, renting cloud GPUs is significantly cheaper and less risky. Purchasing a single NVIDIA H100 setup requires over $30,000 in upfront capital. It takes approximately 14 months of continuous, 24/7 usage to break even, making cloud rentals the smarter choice for preserving venture capital.

Why is cloud computing so expensive for AI?

AI models require massive parallel processing power that only advanced hardware, like NVIDIA GPUs, can provide. The high cost of cloud computing for AI is driven by the sheer hardware expense, immense electricity and cooling requirements, and the global supply-and-demand race to secure top-tier silicon for machine learning.